Get Data from workstations and send to an Excel Table for free

Premise

There are times when I encounter a situation where I want to gather some data from workstations and store it in a spreadsheet / table ect. for tracking purposes. Recently I was working with a client to deploy a cloud printing solution. The on prem print environment was somewhat complex with multiple shared printers some of which were locked down to AD security groups. I needed a way to get a pre-deployment printer inventory for each workstation, then compare a post deployment inventory. In came PowerAutomate and PowerShell.

In a previous article, I covered how to use Power Automate to shuttle data from a workstation/server into an Azure Table using Power Automate and the HTTP request trigger. This method is straight forward, easy, and works great but has a catch. The HTTP trigger is a premium feature which requires a $15/MONTH license. How can we accomplish the same result more or less completely for free? This article will explain the method I came up with using PowerShell, the Microsoft Graph and Power Automate.

Getting a list of printers

Getting a list of printers from each workstation is fairly straight forward using PowerShell.

- Use the

Get-Printercmdlet on each workstation to get a list of printers. Filter for only shared printers. - Run the script as the logged on user, so we get the user connections.

- Move the results off the workstation to a central repository.

Lets put it together. First getting the list of printers :

# Get list of installed shared printers

$printers = Get-Printer | where type -eq "Connection"

$printerList = foreach ($printer in $printers){

[pscustomobject]@{

'ComputerName' = hostname

'PrinterName' = $printer.name

'PrinterPortname' = $printer.portname

'Type' = $printer.type

}

$printerJSON = $printerList | ConvertTo-JSON

Setting up the framework to send email using Graph

Alright now how can we get this data into table and trigger a PowerAutomate flow for free? Send Mail with Microsoft graph. Practical 365 has a great article on how to send email with Microsoft Graph using PowerShell This consits of 3 steps

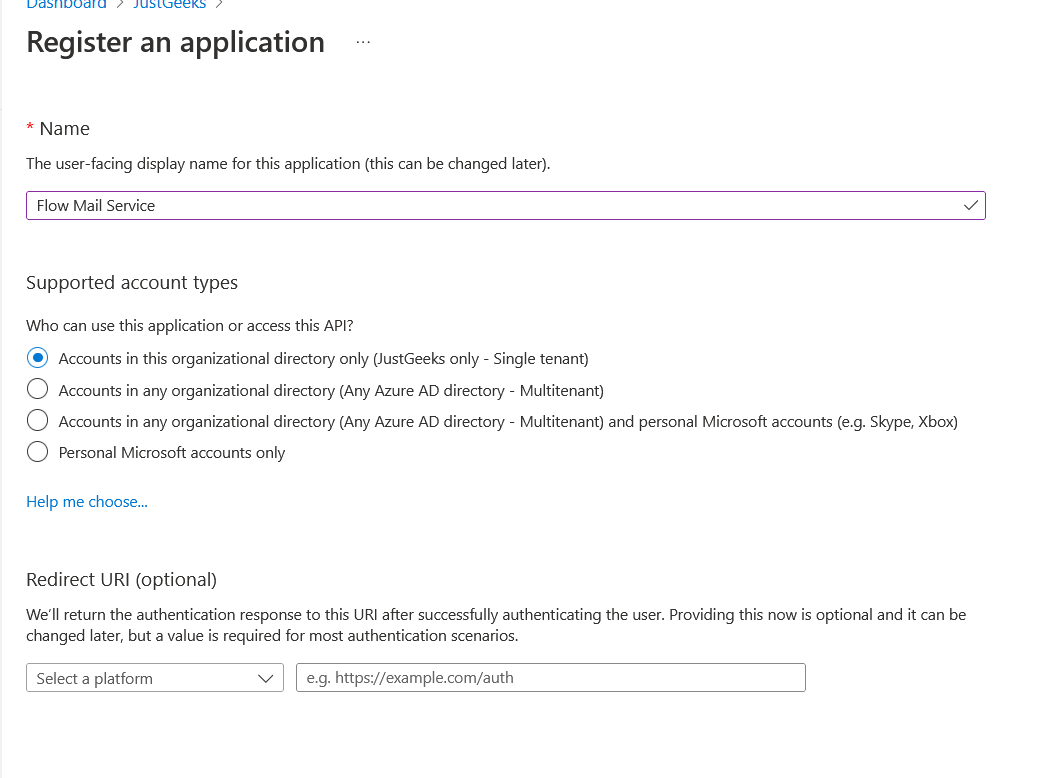

- Create an App registration in AzureAD

- Give the app registration mail.send application rights.

- Create an application secret to use for Authentication.

- Head over to the Azure AD Portal

- Click on Azure Active Directory, then App Registrations

- Click new registration. Give the app a descriptive name

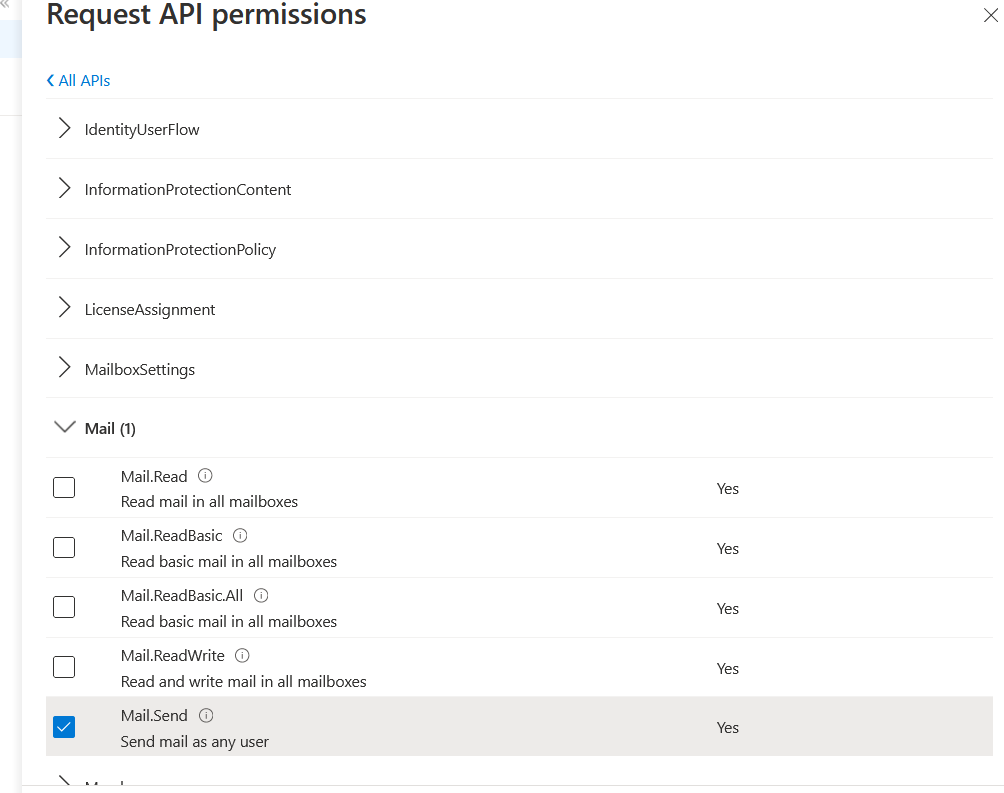

- Under ‘API Permissions’ click app permission. Under Microsoft Graph, Select the application permission ‘Mail.Send’

- Grant Admin Consent.

- Create an app secret

- Go to ‘Certificate and Secrets’

- Click ‘New Client Secret’

- (Optional) Enter a description.

- Copy down the secret value for later

- Go to overview, copy down the tenant ID and app ID for later.

Once we have the app created, we need to prepare exchange:

- Create a shared mailbox for our sending mail account

- Create an application policy to restrict our app from sending as any mailbox other than our service account

- Create a mail enabled security group to apply the application policy to

We can easily do this in PowerShell:

$mailsender = [mailaddress]'flowservice@justgeeks.co'

$appID = 'APP ID you copied down before'

New-Mailbox -Shared -Name $mailsender.user -DisplayName $mailsender.user -Alias $mailsender.user -PrimarySmtpAddress $mailsender.address

New-DistributionGroup -Type Security -Name 'GraphSendAllowed' -Members $mailsender.user # create security group to add graph mail sender to

Set-DistributionGroup 'GraphSendAllowd' -HiddenFromAddressListsEnabled $true # hide from distribution list

New-ApplicationAccessPolicy -AccessRight RestrictAccess -AppId $AppID -PolicyScopeGroupId 'GraphSendAllowed' -Description "Restrict this app's access to flow service account" # apply restriction policy to only allow app send as members of GraphSendAllowed

Send data from each workstation via email

Now that we have a way to send email with the Microsoft Graph, we can craft a PowerShell script to run per workstation to send the data we want via email. We will take the PowerShell snippet from before to get the list of printers. Then add the steps to send an email with graph and attach the list of printers as an attachment in the email. This way we can parse the attachment later for the data we want to store.

# List of printers

$printers = Get-Printer | where type -eq "Connection"

$printerList = foreach ($printer in $printers){

[pscustomobject]@{

'ComputerName' = $env:COMPUTERNAME

'PrinterName' = $printer.name

'PrinterPortname' = $printer.portname

'Type' = $printer.type

}

}

# Convert list to JSON to later parse in PowerAutomate

$json = $printerList | ConvertTo-JSON

#Azure App Registration Variables

$clientID = "125d565f-df97-437a-a5fa-1e119a359a8d"

$Clientsecret = "4e5b5d~lkjjio98LyOOE94i_56daskjllk"

$tenantID = "c7d54f65-0023-4525-a44e-eed2653923ae"

# Mail details

$from = "flowservice@davidjust.com"

$to = "david@davidjust.com"

$subject = "PrinterResults"

#Get an auth token for the GRAPH API using the app registration

$tokenBody = @{

Grant_Type = "client_credentials"

Scope = "https://graph.microsoft.com/.default"

Client_Id = $clientId

Client_Secret = $clientSecret

}

$tokenResponse = Invoke-RestMethod -Uri "https://login.microsoftonline.com/$tenantID/oauth2/v2.0/token" -Method POST -Body $tokenBody

$headers = @{

"Authorization" = "Bearer $($tokenResponse.access_token)"

"Content-type" = "application/json"

}

$URL = "https://graph.microsoft.com/v1.0/users/$from/sendMail"

$bytes = [System.Text.Encoding]::UTF8.GetBytes($json)

$base64 = [System.Convert]::ToBase64String($bytes)

# Craft the JSON POST Body

$body = @"

{

"message": {

"subject": "$subject",

"body": {

"contentType": "Text",

"content": "Results"

},

"toRecipients": [

{

"emailAddress": {

"address": "$to"

}

}

],

"attachments": [

{

"@odata.type": "#microsoft.graph.fileAttachment",

"name": "json.txt",

"contentType": "text/plain",

"contentBytes": "$base64"

}

]

}

}

"@

# Finally, send the email using Graph

Invoke-RestMethod -Method POST -Uri $URL -Headers $headers -Body $Body

Power Automate Flow

Now we have framework from which to send emails through the Microsoft Graph. Now lets move on to creating our Power Automate Flow.

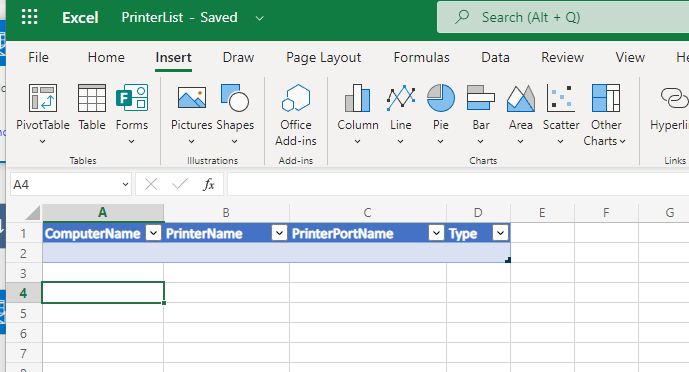

- Before adding creating our PowerAutomate flow, we need to prepare an excel workbook to store results

- Go to portal.office.com and create a blank excel workbook.

- Click insert, table, check my table has headers. Click OK

- Add additional columns and edit each column with the property names you wish to store in the table

- Click File, SaveAs, then rename the workbook and change the location if you wish.

- Login to https://flow.microsoft.com .

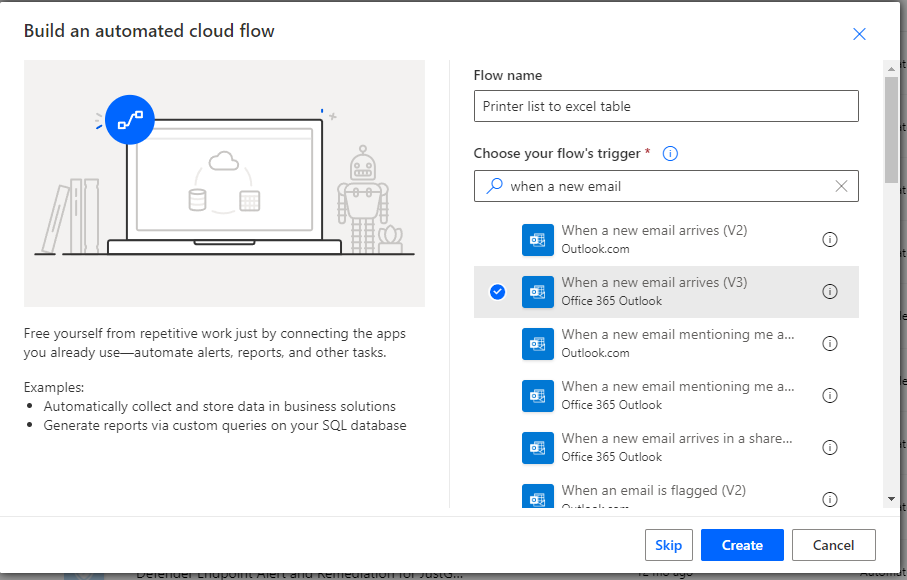

- Created an automated flow with the trigger “O365 When a new email arrives v3”. Enter a name and click create.

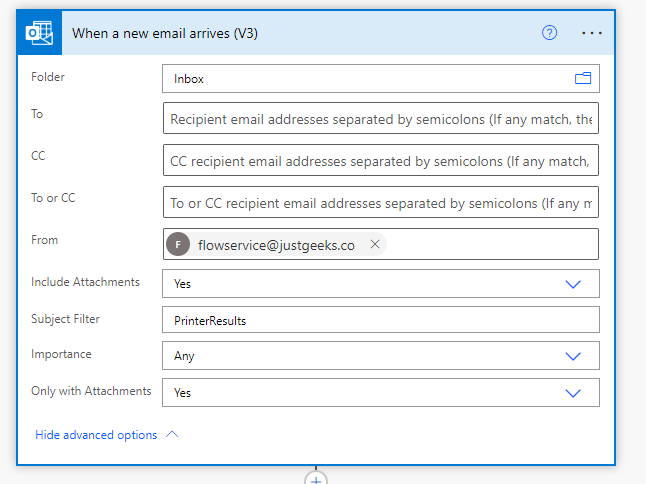

- Expand advanced. Enter the flow service email address in the from field and enter the subject of incoming emails to capture. Select only with attachments. Click new step.

-

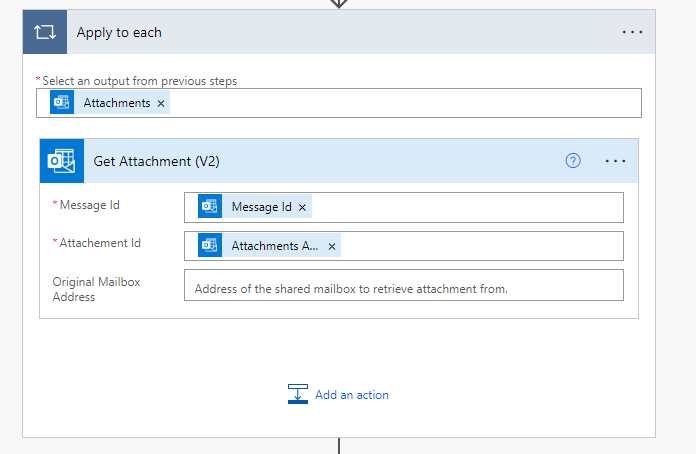

Search for “Get Attachment (V2)”

- Under Message ID, select the dynamic content “Message ID” from our previous step

- For attachment ID, select “Attachments Attachment ID”. This will automatically create an apply to each step.

- Click add an action for the next step

-

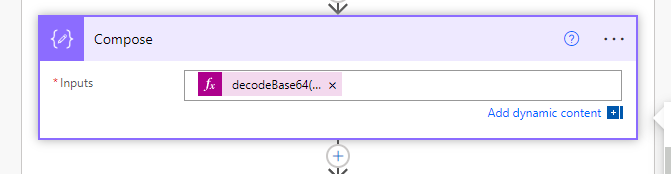

Search for “Compose”. Here we need to enter an expression for inputs. Select expression, then type

decodeBase64(). Click inside the parantheses and under dynamic content, select the dynamic “Content Bytes” to add the content of the attachment. The full expression should look like thisdecodeBase64(outputs('Get_Attachment_(V2)')?['body/contentBytes'])Click add action.

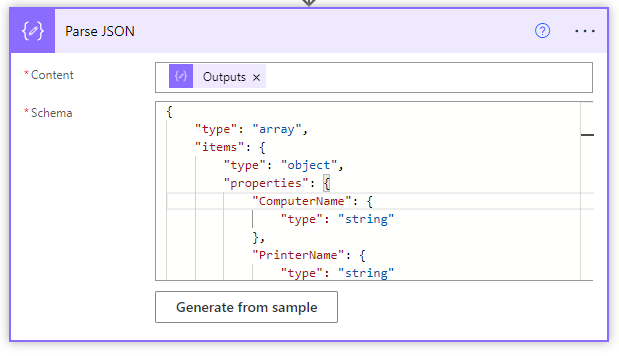

- Search for “Parse JSON”

- In content, select the dynamic content “Outputs” from the compose action.

- For schema, we can simply generate from sample. Open PowerShell, and paste the code for the data we want to generate. Pipe to

ConvertTo-JSONto generate a JSON object. Paste the output into the sample field

$printers =

foreach ($printer in (get-printer)){

[pscustomobject]@{

'ComputerName' = $env:COMPUTERNAME

'PrinterName' = $printer.name

'PrinterPortname' = $printer.portname

'Type' = $printer.type

}

}

$printers | ConvertTO-JSON

-

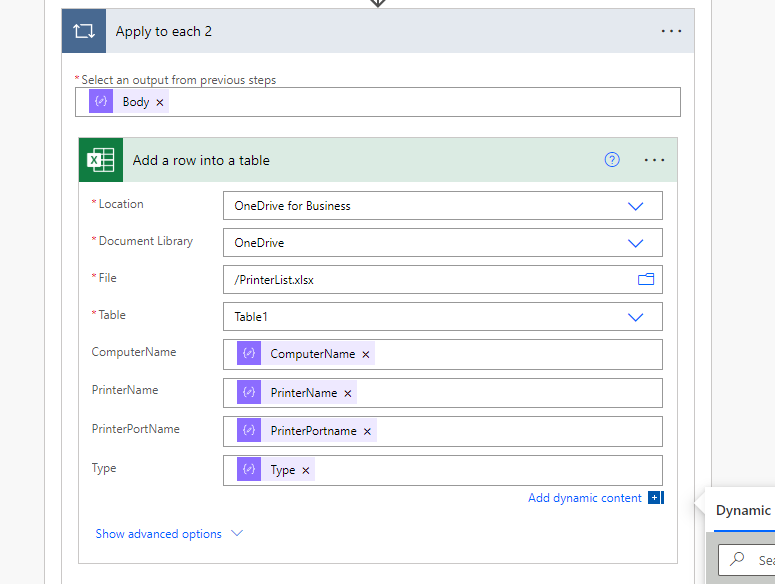

Next add one more action in under apply to each. Search for the action “Add a row into a table (Excel Online)”

- Fill in the fields for location picking the excel workbook you prepared earlier.

- For the table fields, select the dynamic content from the parse JSON action.

- For the final step, add a new step. Search for “Delete an email (v2)”. Add the dynamic content

No save the flow. Go ahead and test the entire PowerShell script. You should see a sucessful run. You can even see the excel table being filled in real time!

Final thoughts

While this isnt the most straight forward or simpllist solution, you cant beat free. I do not like the idea of storing secrets inside scripts, but you can mitigate any risk of misuse by creating the application policy as shown in exchange. Also, when you are done with your script deployment, simply delete the secret inside the app registration. Create a new secret each time you wish to run this flow. If you have any suggestions for improving this flow or another way of doing similar, please let me know!